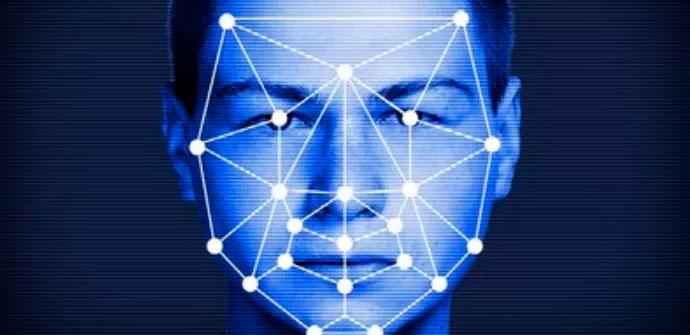

Privacy matters a great deal to users. Unfortunately, our data can be compromised in many different ways. Marketing companies may collect it to add us to spam lists, for example, and the platforms and services we use may misuse it. One case is the facial recognition that some social networks use, which has generated controversy at times. In this article, we report on a new technique that aims to keep photos on the Internet safe from facial recognition algorithms.

A new technique protects photos from facial recognition

Today a computer algorithm can scan and analyze thousands of photos in a matter of seconds. Most of us have seen Facebook suggest tagging someone in a photo after recognizing that person. The same capability is present in various social networks and Internet services.

Many users consider this a privacy problem. After all, their image is stored in databases so that they can be recognized. Images are also a large part of our daily lives: many of the platforms we use store information both as text and as images.

Social networks like Facebook and Instagram can automatically tag a user in photos. Other services like Google Photos can group your photos by the people who appear in them, using Google’s recognition technology.

Now a group of computer security researchers at the National University of Singapore has developed a technique that protects sensitive information in photos by making subtle changes that are almost imperceptible to humans, but that make selected features undetectable by known algorithms.

Modify an image without the human eye detecting it

Tricking a machine so that a facial recognition algorithm fails would normally require modifying a photo noticeably. The image could end up ruined and lose its essence. To overcome this limitation, the research team developed a “human sensitivity map” that quantifies how humans react to visual distortion in different parts of an image, across a wide variety of scenes.
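To make the idea of a sensitivity map more concrete, here is a minimal Python sketch. It is not the researchers' method: the function name `apply_masked_noise`, the `max_delta` budget, and the convention that sensitivity values lie between 0 and 1 are all assumptions made for illustration. Random noise stands in for the carefully optimized changes a real tool would compute. The sketch only shows how a map can limit the amount of change allowed at each pixel.

```python
import random


def apply_masked_noise(image, sensitivity, max_delta=8.0, seed=0):
    """Perturb a grayscale image, changing less where humans are sensitive.

    image:       2D list of pixel intensities in [0, 255]
    sensitivity: 2D list of the same shape, values in [0, 1]
                 (assumed convention: 1 = distortion is easily noticed)
    max_delta:   largest change allowed at a pixel with sensitivity 0
    """
    rng = random.Random(seed)
    result = []
    for image_row, sensitivity_row in zip(image, sensitivity):
        new_row = []
        for pixel, s in zip(image_row, sensitivity_row):
            # The more sensitive the spot, the smaller the allowed change.
            budget = max_delta * (1.0 - s)
            noise = rng.uniform(-budget, budget)
            # Keep the result inside the valid intensity range.
            new_row.append(min(255.0, max(0.0, pixel + noise)))
        result.append(new_row)
    return result


if __name__ == "__main__":
    image = [[100.0, 100.0], [100.0, 100.0]]
    sensitivity = [[1.0, 0.0], [0.5, 1.0]]
    for row in apply_masked_noise(image, sensitivity):
        print(row)
```

In this sketch, pixels with sensitivity 1 are left untouched, while pixels with sensitivity 0 can move by up to `max_delta` intensity levels.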

Development began with a study involving 234 participants and a set of 860 images. Participants were shown two copies of the same image and had to choose the copy that was visually distorted. In this way, the researchers measured what human sensitivity to distortion is like, taking into account factors such as lighting, texture, how objects are perceived, and semantics.
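As a rough illustration of how answers from this kind of two-choice test could be turned into a score, consider the Python sketch below. It is an assumption, not the procedure used in the study: the region labels, the function name `sensitivity_from_trials`, and the scoring rule are hypothetical. The rule relies on one simple fact: with two options, a participant who cannot see the distortion picks the right copy about half the time, so accuracy near 50% means low sensitivity.

```python
from collections import defaultdict


def sensitivity_from_trials(trials):
    """Estimate a 0-to-1 sensitivity score per region.

    trials: iterable of (region, picked_correctly) pairs, where
            picked_correctly is True if the participant chose the
            distorted copy.
    """
    counts = defaultdict(lambda: [0, 0])  # region -> [correct, total]
    for region, picked_correctly in trials:
        if picked_correctly:
            counts[region][0] += 1
        counts[region][1] += 1

    scores = {}
    for region, (correct, total) in counts.items():
        accuracy = correct / total
        # 0.5 is pure guessing; rescale so guessing maps to 0 and
        # perfect accuracy maps to 1.
        scores[region] = max(0.0, 2.0 * (accuracy - 0.5))
    return scores


if __name__ == "__main__":
    trials = (
        [("face", True)] * 9 + [("face", False)]
        + [("sky", True)] * 5 + [("sky", False)] * 5
    )
    print(sensitivity_from_trials(trials))
```

In the made-up data above, distortion on the face is spotted nine times out of ten, so it scores high, while distortion in the sky is spotted only at chance level and scores zero.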

The goal of the project, which took six months to carry out, is to let users change their images slightly: enough to alter what an algorithm sees, but not so much that the human eye notices the difference.

The idea is that users apply this tool to their images before uploading them to social networks, for example, as one more way to protect their privacy.