
Google will improve its visual search tool.

Users will be able to mix text and images in their queries.

The pilot test will begin, for now, only in the United States.

Thanks to the new Multitask Unified Model (MUM), Google's AI-powered algorithm will make it easier for users to search online, giving more precise answers through an improved Google Lens.

According to Google, users will soon be able to connect different pieces of information and give the search engine both written and visual cues that narrow the search, producing answers much more focused on what they need.

Earlier this year, at its Google I/O developer event, the company announced that it would use its new artificial intelligence system to improve its products; however, it is now elaborating on the Lens features that could revolutionize visual search for digital marketing.

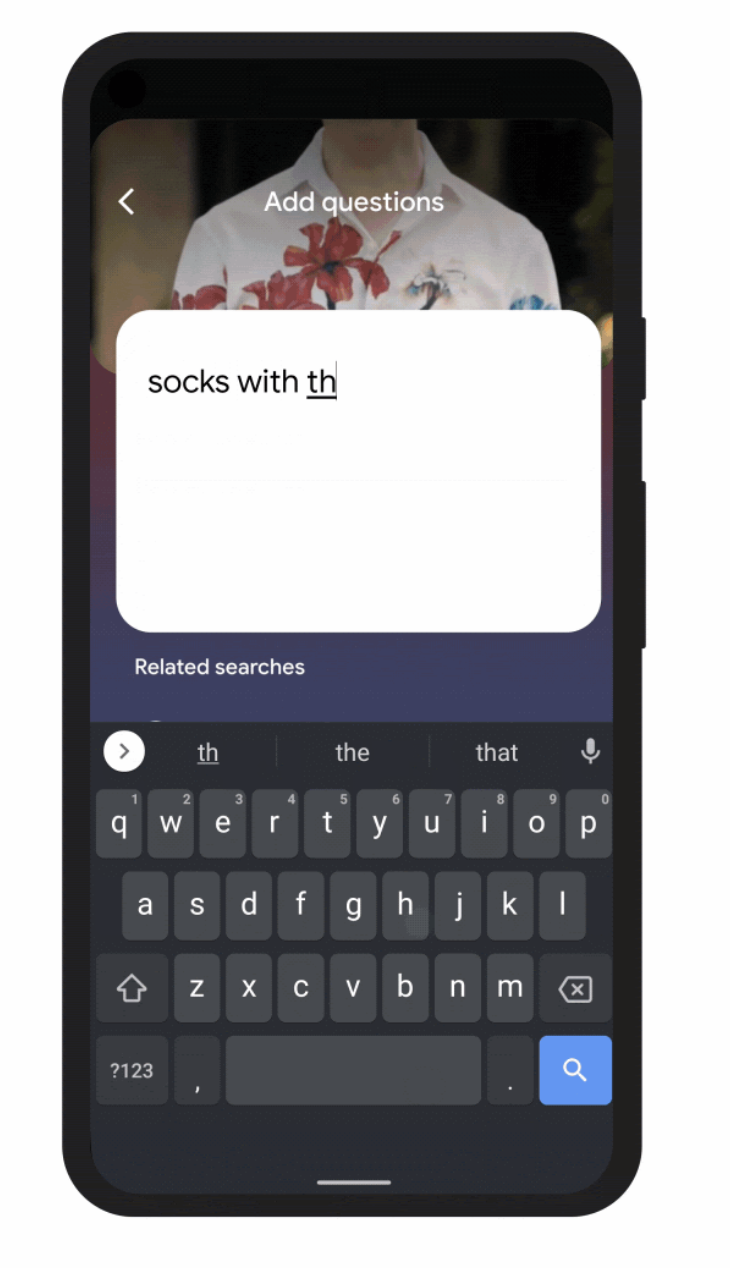

“This helps when you are looking for something that might be difficult to describe accurately in words alone. You can write ‘Victorian stockings with white flowers’, but you may not find the exact pattern you are looking for. By combining images and text in a single query, we make it easier to search visually and express your questions in a more natural way,” explains Prabhakar Raghavan, senior vice president at Google.

How do you search from a smartphone?

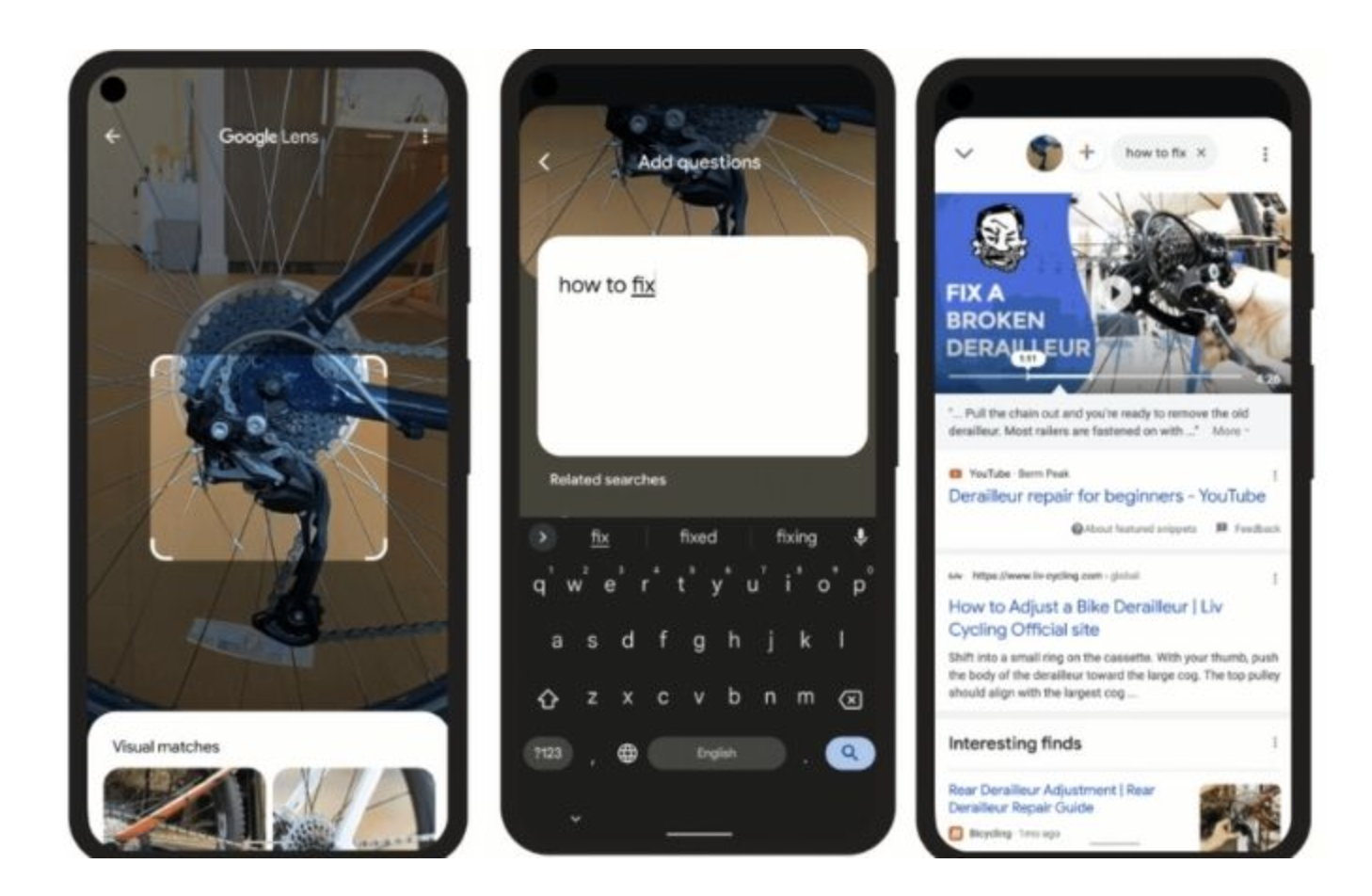

Google explains that app users will no longer be limited to taking a picture and looking for similar objects or places across the vast offering of the World Wide Web: the new implementation also lets them add anything from a single word to a full sentence to narrow down the results.

A basic example Google offers is searching for a fix for a flat tire: just take a picture of the damage, type the word “repair” and let the search engine do the rest. With MUM, the user is expected to receive links to images, forums, videos and specific tutorials on tire repair.

How will it contribute to online marketing?

The new Google Lens experience will also make it much easier to find specific products. For example, if you search for “short jackets”, Google will show you a visual feed of these garments in different colors and styles, along with information on the stores and online shops that offer them.

Among the many improvements the company is making with MUM, it also announced a new filter called “stock”, which indicates in search results whether the online store being visited has the specific product in stock.

This kind of digital inventory is intended to make the shopping experience closer and more personal with brands, just as if customers were buying in a physical store.

However, this feature will launch first in the United States and select English-speaking markets, including the United Kingdom, Canada, and Australia, but is expected to expand rapidly to more countries in the near future.

Finally, iOS users in the United States will be the first to see a new button in the Google Lens application that lets them search all the images on a page with the tool.

Also coming to Chrome

The new smart visual search feature is expected to appear soon in the desktop version of Chrome. By simply right-clicking an image and choosing “Search with Google Lens”, users will get a cropping tool for selecting part of the image, and links to pages with specific results will appear in a sidebar.

So, while the original Google.com search tries to find images similar to what you are looking for, this new feature will let you identify objects, people, text, equations, animals, landmarks, products, brands and more.
