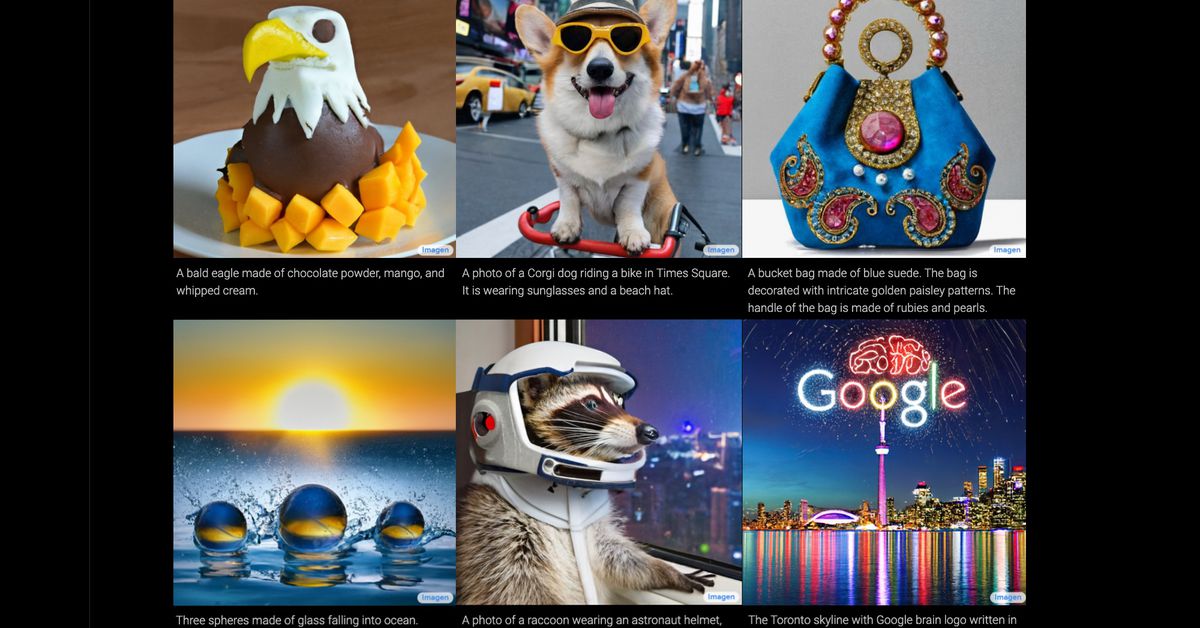

One of Google’s new projects is an AI that converts any text we propose, however crazy it may be, into an image. We tell you how it works in this note!

In recent months, artificial intelligences that generate an image from a text have become quite an interesting trend, and Google did not want to be left behind, which is why it premiered its own AI. Initially, one of the best-known pages was Wombo.art, a page that lets us write any phrase or word and converts it into a drawing in the art style of our preference.

However, there are other types of AI that generate images based on what we write. These are the ones that create an image of exactly what we ask for. For example, if we want a picture of a dog wearing an astronaut helmet, we type that into the AI's platform and it gives us the image in a matter of seconds. One of the most recognized is DALL-E, created by the OpenAI laboratory, which received an update in April of this year.

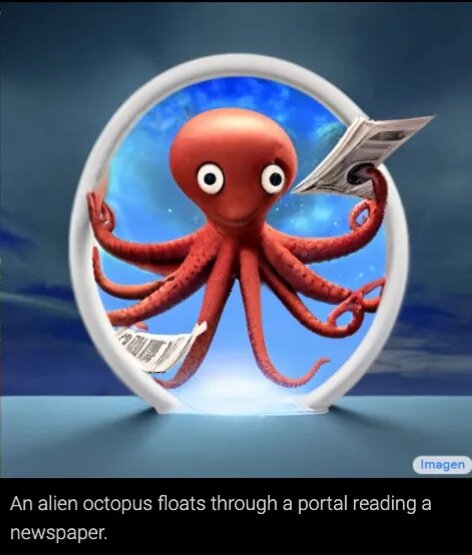

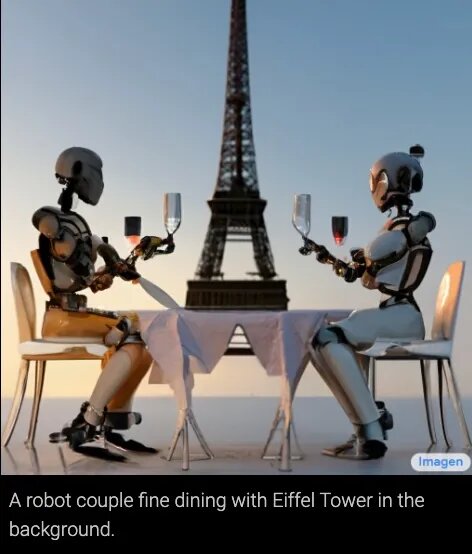

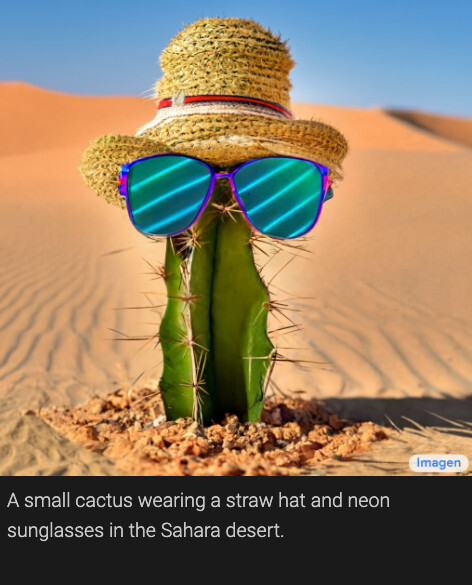

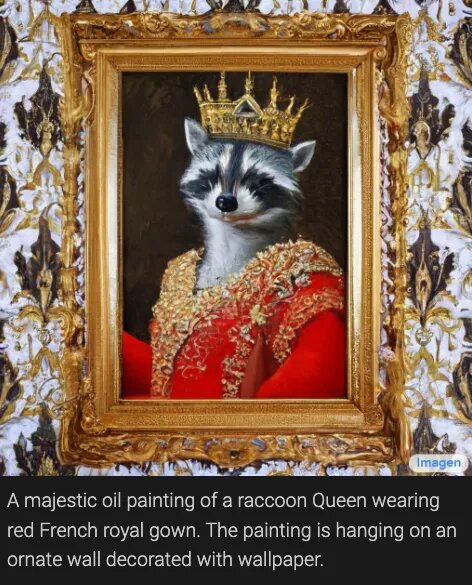

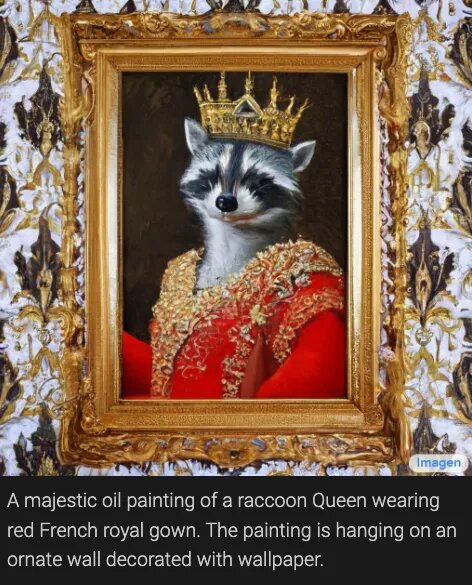

Now Google wants to join this trend and has presented its own artificial intelligence, called Imagen, which seems to be slightly superior to DALL-E. Imagen takes the text we want to transform and gives us literally what we ask for in the form of a photo. For example, in the photo above the phrase was “an alien octopus floating through a portal while reading a newspaper”, and Google's AI gave us exactly that.

These images may be impressive and quite accurate, but keep in mind that the ones presented are only those that turned out best, and that they were created under the watchful eye (and perhaps manipulation) of their creators. When a new AI model is released, the Google Brain team tends to pick the best results to leave people wanting more. While all of these photos are of high quality, not every image the model creates may come out this well.

Images created by text-to-image models often look unfinished, blurry, or smudged. This happened with some of the images generated by OpenAI's DALL-E, which can tend not to fully understand the sentence it is given.

According to Google, its AI is capable of producing much more consistent images than DALL-E 2. This is according to the new benchmark that Google created for this project, called DrawBench. The good thing is that it is not a particularly complex metric: it consists of a list of 200 text prompts that the Google team fed into Imagen and other text-to-image generators. The results from each AI were then judged by human evaluators.

Despite this, we cannot experiment with Imagen, since Google did not release the AI to the public. The main reason for the company's decision is quite interesting: if we all had access to a technology that creates images from a simple phrase, especially one that accepts any input, Imagen could be used to create, for example, fake news.

As for how this AI was trained, Google explained that a large amount of data was fed into it, mainly images from the Internet that have a description. However, the team behind Imagen also commented that they had to apply a filter: because the AI feeds on all the described images it collects from the Internet, it could pick up images that foster stereotypes or discrimination.

Although in the report Google does not explain Imagen's problems in detail, it does indicate that the model “encodes various social biases and stereotypes, including a general bias towards generating images of people with lighter skin tones and a tendency for images portraying different professionals to align with Western gender stereotypes.”

But this is not only a problem with Google's AI, as DALL-E has it too. If DALL-E is asked to generate images of a flight attendant, for example, most of the images we receive will be of a woman. If we enter the word CEO we will receive, to no one’s surprise, many images of the typical successful white man.

These are the main reasons why these types of AI are not available to the general public. DALL-E does not offer its services to everyone either, although it does offer them to some beta testers. The beta, however, filters some of the text that is entered and will not generate images from racist, sexual, or violent phrases.

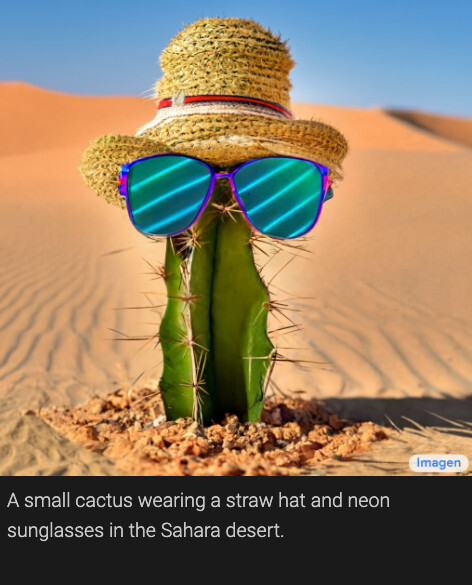

We will probably be able to access this type of AI at some point. Google, for its part, confirmed that it has no plans to release Imagen for public access for now. The company also says it plans to develop a new way to compare “social and cultural bias in future work” and to test future iterations. For the moment, we will have to settle for the images Google released. Not that we are complaining, since we have a cactus with a hat and glasses and a raccoon queen.