ImageBind is a new artificial intelligence model developed by Meta that combines different senses to create a multi-sensory experience. With this latest release, the technology company intends to continue its focus on research and development of these tools.

Meta ImageBind is a new AI model that links several senses, represented as six types of data: text, image/video, audio, depth, thermal, and inertial measurement unit (IMU) readings. By combining them, the tool can relate information across modalities and build a more comprehensive understanding of the environment, which opens the door to immersive multi-sensory experiences.

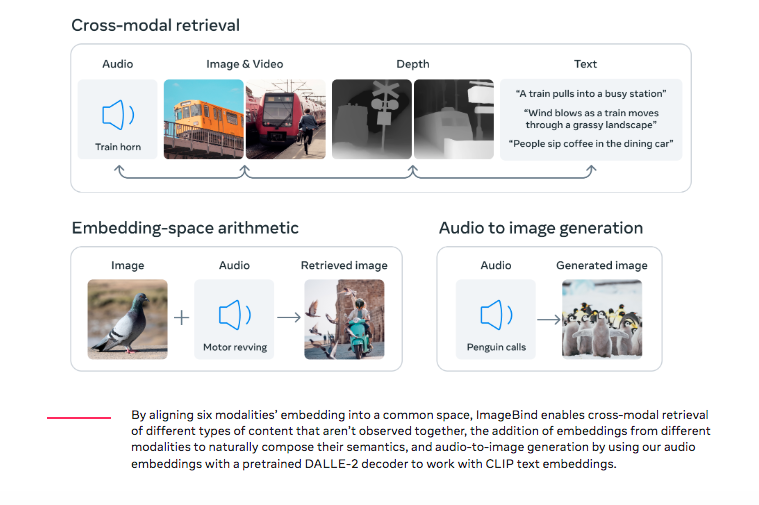

“It can even upgrade existing AI models to support input from any of the six modalities, allowing audio-based search, cross-modal search, multi-modal arithmetic and cross-modal generation,” Meta points out.
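To make cross-modal search and multi-modal arithmetic more concrete, here is a minimal Python sketch. It assumes that every modality is mapped into one shared vector space, so items can be compared by cosine similarity. The three-dimensional vectors, the file names, and the helper functions (`search`, `combine`) are hypothetical and hand-made for illustration; they are not ImageBind outputs and this is not the ImageBind API.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    a, b = normalize(a), normalize(b)
    return sum(x * y for x, y in zip(a, b))

def combine(a, b):
    """Multi-modal arithmetic: add two unit-length embeddings."""
    return [x + y for x, y in zip(normalize(a), normalize(b))]

def search(query, index):
    """Return the name of the indexed item closest to the query."""
    return max(index, key=lambda name: cosine(query, index[name]))

# Hypothetical toy embeddings (assumption: all modalities share one space).
IMAGE_INDEX = {
    "dog_on_beach.jpg": [0.9, 0.1, 0.8],
    "dog_in_park.jpg": [0.9, 0.1, 0.0],
    "empty_beach.jpg": [0.0, 0.1, 0.9],
    "city_traffic.jpg": [0.1, 0.9, 0.0],
}
AUDIO_BARKING = [1.0, 0.0, 0.0]  # stand-in for an audio clip of a dog barking
IMAGE_BEACH = [0.0, 0.0, 1.0]    # stand-in for a photo of a beach

# Cross-modal search: an audio query retrieves an image.
audio_hit = search(AUDIO_BARKING, IMAGE_INDEX)

# Multi-modal arithmetic: audio plus image retrieves an image matching both.
combined_hit = search(combine(AUDIO_BARKING, IMAGE_BEACH), IMAGE_INDEX)

print(audio_hit)     # dog_in_park.jpg
print(combined_hit)  # dog_on_beach.jpg
```

In a real system the vectors would come from a trained encoder for each modality rather than being written by hand, and the index would hold many more items.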

The company does not intend to stop there: it says that at some point the model could link more modalities, such as touch, speech, smell, and fMRI signals from the brain.

Meta ImageBind can help build multi-modal AI systems that learn from all kinds of data around them. As the number of modalities increases, new possibilities open up for developing more complete systems, such as combining 3D sensors and IMUs to design immersive virtual worlds.

It can also provide a way to explore memories. With the ability to search images, videos, audio files, or text messages using a combination of text, audio, and image, Meta ImageBind could change the way we search through and revisit them.